The perennial dispute between naturalism and theism has found renewed public expression recently, symptomatic of a broader return to metaphysical inquiry in Anglophone intellectual culture, one notably more pronounced than in the secular traditions of Western Europe. The present article takes this contemporary controversy as its occasion but not its object: rather than commenting on the debate's cultural symptoms, it descends to its philosophical foundations, examining the strongest arguments on both sides from a position of deliberate symmetry. Theism and naturalism begin here on equal footing. Yet this symmetry of exposition does not entail neutrality of conclusion; I proceed explicitly from the perspective of a fair agnostic naturalism. The recurring claim in contemporary philosophy of religion that science presupposes intelligibility and therefore requires a transcendent ontological ground is more rhetorically compelling than it is philosophically conclusive. Classical theism, dressed in Thomistic or neo-Platonic language, presents itself as the only serious answer to the question "why is there ordered reality at all?" But on closer inspection, theism does not answer this question either. It merely relabels the mystery and declares victory.

But the argument that follows is not a defence of scientism either. It accepts that science cannot justify its own preconditions from within its methods alone. What it contests is the inference that this epistemic gap must be filled by a personal, necessary being. That inference is a non sequitur, and an expensive one.

I. The Presupposition Argument and Its Real Force

The strongest version of the theistic challenge runs as follows: science operates by assuming that reality is intelligible, that reason is reliable, and that the uniformity of nature holds. None of these can be demonstrated by science without circularity. Therefore science borrows ontological capital it cannot itself generate. Classical theism, by identifying God with being itself (esse ipsum in Aquinas's formulation), offers a ground for intelligibility that is not an additional hypothesis inside the system but the very condition for any system at all.

"Science does not require revealed religion, scripture, or ecclesial authority but it does require real being, intelligibility, normativity, truth, and real good. If those are denied, science collapses into instrumentalism."

The theistic position under examination

This is, to my mind, the strongest version of the argument, and it deserves respect. The line of thought has deep roots: it echoes Alvin Plantinga’s influential evolutionary argument against naturalism (the worry that unguided evolution would undermine our confidence in reason itself), C.S. Lewis’s classic argument from reason, and, much earlier, Leibniz’s principle of sufficient reason, the demand that everything must ultimately have an explanation. The intuition that a merely contingent universe cannot account for its own rational transparency is not trivially dismissed.

And yet, I think, it fails, not because the intuition is wrong, but because the proposed solution repeats the problem at a higher level of abstraction, while introducing additional difficulties of its own.

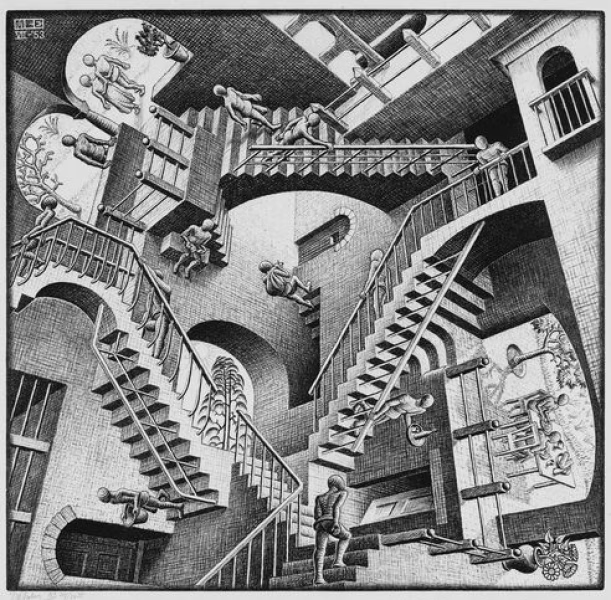

II. The Symmetry Problem: Brute Facts All the Way Down

The decisive objection, it seems to me, lies in what we might call the symmetry of explanatory termination: both naturalism and classical theism eventually reach a point where they must simply say that this is how reality fundamentally is, with no further explanation available. Each terminates explanation in a brute, ungrounded fact. Naturalism terminates in a self-consistent, structured universe: ordered, law-like, and intelligible as a fundamental feature. Theism terminates in a God whose nature is necessarily rational, necessarily good, and necessarily existent. The real question, then, and one I return to repeatedly, is simply which stopping-point is more parsimonious.

If naturalism owes an explanation for why reality is intelligible, theism equally owes an explanation for why God has this particular nature (rational, unified, benevolent) rather than another. The classical theist responds that God exists necessarily and could not be otherwise. But this is simply assigning "necessary existence" as a predicate, a move Kant already rejected in his critique of the ontological argument, where he argued that existence is not a real predicate that can be added to the concept of a thing. You cannot define something into necessity. Both positions hit a wall. Naturalism says: structured reality is the wall. Theism says: divine nature is the wall. Neither escapes brute facticity. The question is only which wall is more parsimonious.

David Hume made a closely related point in the Dialogues Concerning Natural Religion: if it is conceivable that a necessarily existent God could account for the universe, it is equally conceivable that the universe itself has the character of necessary existence, removing the middleman entirely. Bertrand Russell pressed exactly this point in his 1948 debate with Frederick Copleston: "The universe is just there, and that's all."

III. The Contingency Asymmetry: Does "Necessary Being" Escape the Wall?

A sophisticated theist will rightly press a deeper objection here: the symmetry argument, they say, obscures a crucial distinction. Naturalism’s brute fact is contingent; theism’s is necessary. These walls are not the same height. A contingent stopping point is less satisfying than a necessary one because it leaves open the further question of why this contingent thing exists rather than nothing. In the confrontation with classical theism, this is one of the strongest objections. But the objection itself suffers from three weaknesses, which I will address in increasing order of strength.

First, the concept of "necessary existence" as applied to God is less coherent than it appears. What would it mean for a being to exist necessarily? In Kripkean modal logic, necessary truths are those true in all possible worlds. But the claim that God exists in all possible worlds is not a logical truth; it is itself a substantive metaphysical assertion that requires independent support. Asserting it does not establish it. The classical theist has not shown that divine necessary existence is coherent; they have simply declared it. Until the coherence of necessary being is independently demonstrated, the appeal to it as an asymmetric advantage over naturalism's contingent starting point begs the very question at issue.
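The gap between the modal definition and the theistic claim can be made explicit. The following is only a sketch: the symbols $W$ (the set of possible worlds), $\varphi$ (an arbitrary proposition) and $G(x)$ ("x is God") are notation introduced here for illustration and do not come from the text.

```latex
% Requires amsmath (\text) and amssymb (\Box).
% W, \varphi and G(x) are illustrative symbols, not taken from the essay.
\[
  \Box\varphi \iff \forall w \in W:\ w \models \varphi
\]
\[
  \text{Theistic claim:}\quad \Box\,\exists x\, G(x)
\]
```

The first line only says what necessity means; the second is a further claim about what exists at every world. Nothing in the definition alone delivers it, which is why it needs independent support.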

Second, and more fundamentally, even granting that necessary existence is coherent and that God possesses it, the theist has not explained why a necessary being would have the particular rational and benevolent nature required to underwrite scientific intelligibility. The necessity of God's existence does not entail the necessity of God's specific nature. One could conceive of a necessarily existent being that is not rational, not unified, not truth-conferring, a necessary chaos, or a necessary indifferent ground. Leibniz himself recognized this difficulty: the Principle of Sufficient Reason requires not just a necessary being but a perfectly rational and good one. But the goodness and rationality of that being are themselves further attributes requiring explanation, or alternatively, further brute axioms. The contingency asymmetry, even when granted, does not close the explanatory gap; it merely relocates it once more.

Third, the naturalist is entitled to press the Humean move with more philosophical precision: if "necessary existence" is a coherent predicate, there is no principled reason it cannot apply to the universe or to its fundamental laws. Some contemporary physicists have speculated, not unreasonably, that the laws of physics may be necessary given deeper mathematical structures, and that physical reality may be the unique self-consistent structure that exists. This is admittedly speculative. But it is no more speculative than asserting divine necessary existence, and it requires no additional personal attributes. The naturalist does not claim to have established this; the point is that the theist has not established the asymmetry either.

IV. The Evolutionary Argument Against Naturalism: A Closer Engagement

Plantinga's Evolutionary Argument Against Naturalism presents the most technically demanding challenge. The argument holds that if naturalism is true and our cognitive faculties are the product of evolution selected for survival rather than truth-tracking, then we have defeaters for the reliability of those very faculties, including our belief in naturalism. Naturalism thus either defeats itself, or requires an additional premise to secure cognitive reliability. Classical theism, by contrast, provides a truth-conferring designer whose intentions guarantee reliable cognition.
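The structure of the argument can be set out schematically. What follows is a compressed sketch, not Plantinga's full presentation; the labels $R$, $N$ and $E$ follow the usage common in the literature on the argument and do not appear in the text above.

```latex
% Requires amsmath (\text, align*).
% R: our cognitive faculties are reliable
% N: naturalism is true
% E: our faculties arose through unguided evolution
\begin{align*}
(1)\quad & P(R \mid N \wedge E) \text{ is low or inscrutable.} \\
(2)\quad & \text{Anyone who accepts } N \wedge E \text{ and sees (1) has a defeater for } R. \\
(3)\quad & \text{A defeater for } R \text{ defeats every belief those faculties produce, including } N \wedge E. \\
\text{Hence}\quad & N \wedge E \text{ cannot be rationally accepted.}
\end{align*}
```

Laid out this way, it is clear that everything turns on premise (1): if the probability of reliable cognition under naturalism is not low, the defeater never arises.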

The argument is ingenious. A careful response requires three distinct moves.

First, the EAAN depends on a contested assumption: that survival fitness and truth-tracking are sufficiently decoupled under naturalism that the probability of reliable cognition is low or inscrutable. But this assumption is questionable. There are strong functional reasons to expect that evolution selects for broadly truth-tracking cognitive faculties, not merely adaptively useful illusions. An organism that systematically misrepresents the causal structure of its environment, mistaking predators for food, or failing to form accurate models of spatial relationships, will be selected against. The decoupling of fitness and truth-tracking is not the default naturalistic prediction; it requires special argument, which Plantinga provides but which remains genuinely contested in the literature.

Second, and more decisively, theism faces an exactly analogous problem that is rarely stated with sufficient force. The argument that a good God guarantees reliable cognition is only valid if we already know that: (a) God exists; (b) God is good; (c) God intended our faculties to track truth; and (d) God's implementation was competent. Each of these premises either requires its own justification or constitutes an additional brute axiom. The argument is not merely circular in the informal sense; it is formally so: you cannot use the reliability of reason to establish theism, and then use theism to guarantee the reliability of reason, without begging the question. Plantinga acknowledges this and offers the ontological argument as an independent route to (a), but that route faces Kant's objection. The theistic resolution of the EAAN is no less question-begging than the naturalistic one; it simply buries the circularity at a greater depth of abstraction.

Third, and most practically, the inductive track record of human reasoning constitutes genuine, if defeasible, evidence for its reliability that does not depend on prior metaphysical resolution. We have cumulative evidence, from technology, medicine, engineering, and predictive science, that our cognitive faculties track features of the world reliably enough for a vast range of purposes. This evidence is not self-validating in a purely logical sense, but it is the same kind of evidence we use for every other empirical claim. The demand for a non-circular metaphysical guarantee of cognitive reliability, before any empirical inquiry can proceed, sets an epistemic standard that no framework, naturalism or theism, can meet. The appropriate response, it seems to me, is not to search for such a guarantee but to recognise that the demand itself is excessive.

V. The Modal Cosmological Argument: Leibniz, Pruss and the Principle of Sufficient Reason

A particularly sophisticated contemporary version of the cosmological argument has been advanced by philosophers Alexander Pruss and Robert Koons. Their approach sets aside Thomistic metaphysics and focuses instead on a strong version of the Principle of Sufficient Reason: the idea that every contingent fact must have an explanation. The argument then proceeds as follows: the universe as a whole, if contingent, requires an explanation that cannot itself be contingent; therefore there must be a necessary being providing that explanation. This version deliberately sidesteps the brute-fact objection by locating necessity in the explanatory terminus itself.

The PSR itself requires justification. Why should every contingent fact have a sufficient reason? This is not a logical truth: it is not self-contradictory to imagine contingent facts without sufficient reasons. Leibniz regarded the PSR as a fundamental metaphysical principle, but asserting its status as fundamental is precisely what is at issue. If the theist invokes the PSR as a brute foundational commitment, they have simply relocated the brute fact to a different level: the principle, rather than the entity, becomes the ungrounded axiom. If they attempt to derive the PSR from something else, that derivation either succeeds (in which case the PSR is not fundamental) or requires its own foundation (in which case the regress continues). The PSR cannot simultaneously be a brute axiom and a principle that eliminates brute axioms.

Even granting the PSR, a critical ambiguity infects its application. The PSR, in its standard formulation, requires that every contingent fact have an explanation. But the explanation of a contingent fact can itself be contingent, provided it is explained by a further fact, and so on. The inference from "contingent reality requires explanation" to "therefore a necessary being exists" requires a stronger claim: that the regress of contingent explanations must terminate. This is the cosmological argument's key non-obvious premise. Pruss and Koons provide sophisticated defences of it, but they depend on further contested metaphysical principles (such as the impossibility of infinite causal regresses or the principle of recombination). These are not established logical truths; they are contestable positions within metaphysics. The modal cosmological argument is not a proof; it is a sophisticated inference whose force depends entirely on which additional metaphysical principles one is prepared to accept.

Even granting the PSR and the termination of the regress in a necessary being, the theist faces what we might call the content problem: nothing in the argument establishes that the necessary being has any of the attributes required to ground intelligibility, namely rationality, goodness, and personhood. A necessary being could be a bare mathematical structure, a mindless ground of being, or a chaotic substratum. The additional step from "necessary being" to "classical theism" requires extensive further argument: cosmological arguments for God's power, ontological arguments for God's goodness, and so forth. Each introduces new premises, each contestable. The cumulative case begins to resemble a house of cards: impressive in construction, fragile under pressure.

VI. Divine Simplicity and the Parsimony Claim

The invocation of Occam's razor above passes too quickly over a critical point. The Thomistic theist will argue that God is not a complex entity at all. On this view God is pure act, a technical term meaning that God’s essence and existence are identical, with no unrealised potentialities (no potency) and no internal parts. There are, strictly speaking, no properties in God over and above the divine essence itself. On this understanding, classical theism begins not with a complicated being but with something radically simple, perhaps simpler, the theist claims, than a physical universe containing multiple fundamental constants and forces. The parsimony objection, the theist argues, runs in the wrong direction.

The doctrine of divine simplicity is itself philosophically contested to a degree that makes it unavailable as a straightforward parsimony argument. If God has no real distinction between, say, omniscience and omnipotence, if these are literally identical in God, then a range of logical difficulties follows. Christopher Hughes and Alvin Plantinga have independently argued that divine simplicity, taken strictly, generates contradictions or at minimum requires such a radical revision of predication that it is unclear what the doctrine is even claiming. A supposedly simple entity that is simultaneously the fullness of power, knowledge, goodness, and necessary existence, while having no real internal distinctions, strains the concept of simplicity past the breaking point. Theological simplicity of this kind is not the parsimony of Occam's razor; it is simplicity as a defined term of art within a particular metaphysical framework.

Even if divine simplicity is coherent, the comparison with naturalism is not between "one simple God" and "a complex universe." It is between "one claimed simple God, plus the relationship between God and the universe, plus the explanatory structure of why God creates this universe rather than another, plus the mechanism by which an immaterial will produces material reality", and "a structured physical reality." Once all the explanatory machinery required by classical theism is placed on the scale, the parsimony case for theism becomes significantly harder to sustain. Elliott Sober's formulation of parsimony as inference to the best explanation requires not just fewer entities but fewer unverified explanatory posits. On that broader criterion, naturalism retains its advantage.

VII. Gödel's Incompleteness and the Symmetry of Epistemic Humility

The theistic deployment of Gödel's incompleteness theorems in support of the presupposition argument merits sustained attention, as it continues to appear in sophisticated apologetics. The argument holds: if formal systems cannot close upon themselves, this demonstrates that reality requires an external ground, one that is not itself a formal system.

But this reading is precisely backwards, or at minimum, symmetrical in a way the theist cannot exploit. Gödel's first incompleteness theorem (1931) establishes that any consistent, effectively axiomatized formal system capable of expressing elementary arithmetic contains true statements that cannot be proven within that system. This is a profound result, with genuine implications for the philosophy of mathematics and mind. But it does not point toward God: it points toward structural limits on all closed explanatory systems, including metaphysical ones.
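For reference, the theorem can be stated compactly. This is a sketch of the standard textbook formulation rather than Gödel's original wording; the symbols $T$ and $G_T$ are notation introduced here.

```latex
% Requires amsmath (\text) and amssymb (\nvdash, \mathbb).
% T:   a consistent, effectively axiomatized theory that interprets elementary arithmetic
% G_T: the Gödel sentence constructed for T
\[
  T \nvdash G_T
  \qquad\text{and}\qquad
  \mathbb{N} \models G_T
\]
```

Both hypotheses on $T$ matter: the result concerns formal theories with a mechanically checkable set of axioms, and it says nothing by itself about systems that fail to meet that description.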

If Gödel's lesson is that systems cannot self-ground, classical theism is not exempt. The theistic system (divine omniscience, omnipotence, necessary existence, perfect goodness) is itself a formal or semi-formal structure making claims about all possible states of affairs. If that structure is rich enough to do the explanatory work required of it, Gödelian humility applies to it as well. The divine nature, taken as the axiom set of the theistic system, will contain truths it cannot prove from within. The theorem counsels epistemic humility toward all explanatory frameworks, naturalistic and theological alike. It is not a ladder that leads to God; it is a reminder that no such ladder reaches its destination without resting on something it cannot itself justify.

It is worth adding that Gödel's theorems apply to formal systems, not to reality itself. The inference from "formal systems have unprovable truths" to "reality requires an external metaphysical ground" involves a category error: treating physical or metaphysical reality as if it were a formal deductive system. The universe is not a theorem-proving machine, and its intelligibility does not depend on it being provably complete.

VIII. Naturalized Epistemology: Methodological Circularity and Reflective Equilibrium

Quinean naturalized epistemology is vulnerable to the charge of question-begging: if the theist asks whether science's foundations are grounded, responding that we should simply accept science's picture without external grounding is not an argument; it is the naturalist's conclusion stated as a premise. This objection requires a careful response.

The naturalist's position, properly understood, does not claim to escape the need for foundational commitments. It claims, more modestly, that the appropriate epistemic methodology is not the demand for a non-circular external foundation, which no framework can satisfy, but the coherence and reflective equilibrium of one's overall web of beliefs. On this view, we begin from the middle, from the cognitive and perceptual capacities we actually have, and revise our beliefs in light of their mutual coherence and their friction with experience. There is no view from nowhere; there is only the ongoing project of making our beliefs more coherent, more consistent, and more empirically adequate.

This is not viciously circular. It is the recognition that foundationalism, the demand that all knowledge rest on absolutely certain, non-inferential foundations, has failed as an epistemological programme, for reasons independent of the theism debate. The theist who demands a non-circular justification for naturalism faces exactly the same foundationalist regress. Even if God grounds cognitive reliability, our access to this fact is through the very faculties whose reliability is at issue. The theist cannot step outside cognition to verify the divine guarantee. Both frameworks operate, ultimately, within the circle of human understanding; what differentiates them is not the escape from circularity, neither achieves that, but the relative coherence, parsimony, and productivity of the overall picture.

Quine's naturalized epistemology argues not that science is self-vindicating by fiat, but that the question "is science reliable?" is itself a scientific question, answerable by the best scientific and philosophical methods we have. This is a principled methodological choice, one the naturalist can defend as more productive and more honest than the alternative, which introduces a transcendent guarantor who must itself be accessed through the very faculties in question.

IX. The False Binary: Ontological Realism vs. Nihilism

The framing of this debate ("the real divide is ontological realism versus ontological nihilism") is rhetorically effective but philosophically misleading. It presents naturalism as committed to nihilism unless it accepts a transcendent ground, which is a non sequitur. Naturalism, properly understood, is a form of ontological realism. It affirms that there is a mind-independent reality, that it has genuine structure, that truth is correspondence to that structure, and that our evolved cognitive faculties track it reliably enough for science to work. What it does not affirm is that this structure requires a personal, intentional underwriter.

The move from "reality is genuinely ordered" to "therefore classical theism" requires several additional premises that the theist provides by fiat rather than argument. Hilary Putnam's internal realism and John Dewey's pragmatic naturalism both demonstrate that one can hold robust commitments to truth, normativity, and rational enquiry without requiring a metaphysical guarantor beyond the natural order itself. The choice is not between God and chaos. It is between two kinds of unexplained starting points, and naturalism's is the leaner one, once the full ontological cost of classical theism is properly assessed.

The Categorical Gap That Isn't

The claim that there is a "categorical gap" between naturalism's acceptance of brute intelligible reality and theism's grounding of it in divine being rests on an asymmetry that does not survive sustained scrutiny. I have engaged five of the strongest theistic objections in their most sophisticated forms:

The parsimony of divine simplicity, on examination, dissolves into either incoherence (if pressed strictly) or a highly contested metaphysical framework (if pressed charitably). The ontological machinery required to connect a simple God to a complex universe is not captured by the bare assertion of simplicity.

The contingency asymmetry (the claim that a necessary being provides a superior stopping point to a contingent one) is undermined by the contested coherence of necessary existence as a predicate, by the content problem (nothing about necessity entails rational benevolence), and by the naturalist's symmetric entitlement to claim modal necessity for the physical order.

The Evolutionary Argument Against Naturalism, despite its ingenuity, is equally damaging to theism: the theistic resolution requires assuming God's existence, goodness, and competence as prior premises, a circularity that matches naturalism's, without being more transparent about it.

The modal cosmological argument, in its Leibnizian form, either renders the PSR itself a brute axiom (undercutting the argument's force), fails to establish that contingent regresses must terminate in a necessary being, or establishes a necessary being whose nature is underdetermined, falling far short of classical theism.

And Gödel's incompleteness theorems, properly understood, counsel humility toward all closed explanatory systems, including the theological one, rather than providing a route to transcendent grounding.

What classical theism adds, a personal, omnipotent, necessarily existent being, is not an explanation of intelligibility. It is intelligibility under a different description, with additional attributes (personhood, will, goodness, simplicity) that themselves require either explanation or brute acceptance, and that multiply unverified ontological posits without corresponding explanatory gain. By any standard reading of theoretical parsimony (from Ockham to Sober) the naturalist position is preferable.

Declaring "no transcendent answer is required" is not evasion. It is the recognition that the demand for such an answer is itself a metaphysical choice, one that naturalism is entitled to decline. The burden of proof rests with the position that introduces the more complex entity, not the one that declines to. And where the introducing position comes trailing five additional contestable premises for every one it claims to resolve, that burden becomes considerably heavier.

The God hypothesis does not close the explanatory gap. It relocates it and charges a high ontological price for the removal service.

- As an example of this debate in the Anglophone intellectual world, see the interaction between Gad Saad and Jordan Peterson: https://x.com/GadSaad/status/2026700246688973038?s=20
- Alvin Plantinga, Where the Conflict Really Lies: Science, Religion, and Naturalism (Oxford University Press, 2011). Plantinga's EAAN remains the most rigorous version of the self-defeat objection to naturalism, and is engaged directly in Section IV above.
- Thomas Aquinas, Summa Theologiae, I, Q. 2–3. The five ways and the doctrine of esse ipsum subsistens.
- G.W. Leibniz, Principles of Nature and Grace (1714): the originating formulation of the sufficient reason demand.
- Immanuel Kant, Critique of Pure Reason (1781), "Transcendental Dialectic," on the impossibility of the ontological argument.
- David Hume, Dialogues Concerning Natural Religion (1779), Part IX: Cleanthes vs. Demea on necessary existence.
- Bertrand Russell & Frederick Copleston, BBC Radio Debate on the Existence of God (1948). Transcript widely available.
- For detailed engagement with the EAAN, see: Alvin Plantinga, Warrant and Proper Function (Oxford University Press, 1993), ch. 12; for critical responses, Michael Tooley in Plantinga, Tooley, Knowledge of God (Blackwell, 2008).
- Alexander Pruss, The Principle of Sufficient Reason: A Reassessment (Cambridge University Press, 2006); Robert Koons, Realism Regained (Oxford University Press, 2000). The most technically rigorous contemporary defences of the Leibnizian cosmological argument.
- Elliott Sober, Ockham's Razors: A User's Manual (Cambridge University Press, 2015). Sober's formulation of parsimony as inference to the best explanation is the relevant standard for comparing explanatory frameworks.
- Kurt Gödel, "Über formal unentscheidbare Sätze der Principia Mathematica und verwandter Systeme I," Monatshefte für Mathematik und Physik 38 (1931): 173–198. For the category-error problem in applying Gödel to metaphysics, see Torkel Franzén, Gödel's Theorem: An Incomplete Guide to Its Use and Abuse (A K Peters, 2005).
- John Rawls, "The Independence of Moral Theory," Proceedings and Addresses of the American Philosophical Association 48 (1974–75): 5–22; on reflective equilibrium as a method.
- W.V.O. Quine, "Epistemology Naturalized," in Ontological Relativity and Other Essays (Columbia University Press, 1969).
- John Dewey, Experience and Nature (1925); Hilary Putnam, Realism with a Human Face (Harvard University Press, 1990); Christopher Hughes, On A Complex Theory of a Simple God (Cornell University Press, 1989), for the critique of divine simplicity from within analytic theology.